This book represents years of practical experience solving real automation challenges. The tools, patterns, and approaches described have been deployed and refined through countless iterations.

This book is written for everyone who wants to truly understand test automation, not just copy-paste code that works "sometimes." Whether you're:

If you've ever wondered:

- "Why does my test pass locally but fail in CI?"

- "What is the browser actually doing when I call click()?"

- "Why can't Selenium find an element that's clearly on the page?"

- "How do websites detect that I'm using automation?"

This book will give you those answers—and more.

Unlike theoretical automation guides that show you the happy path, every chapter in this book is born from real production failures. These aren't academic exercises—they're battle-tested solutions that have been deployed in enterprise environments handling:

"Automation should be invisible."

When automation works perfectly, nobody notices. When it fails, everyone suffers. The goal isn't to write tests—it's to create a safety net that catches defects before they reach production while staying out of everyone's way.

This book embraces three core principles:

This book isn’t just about using tools—it’s about understanding the mechanics behind them. We use a suite of specialized open-source tools as a lens to explore the deep internals of how modern automation actually works:

| Tool | Problem It Solves | Category |

|---|---|---|

| Waitless | Flaky tests from race conditions | Stability |

| Project Vandal | Tests that pass but don't catch bugs | Mutation Testing |

| Selenium Teleport | 10+ seconds per login | Authentication |

| SB Stealth Wrapper | Bot detection blocking tests | Stealth |

| Lumos ShadowDOM | Elements hidden in Shadow DOM | Modern Web |

| Visual Guard | Visual regressions missed by DOM tests | Visual Testing |

| Visual Sonar | Automating remote desktops (no DOM) | RPA |

| Selector Scout | Brittle, constantly-breaking selectors | AI |

| Selenium Chatbot Test | Testing streaming AI interfaces | GenAI |

| Pytest-MockLLM | Testing LLM features without API costs | AI Testing |

| pytest-glow-report | Reports stakeholders can understand | Reporting |

| The Sentinel | Autonomous agentic orchestration | Orchestration |

Start with Part I: Foundations. Don't skip Chapter 1 on browser internals—it will save you countless hours of debugging later. Every mysterious error you'll encounter has roots in concepts explained there.

Recommended path: 1. Part I (Foundations) → Part II (Visual Testing) → Part III (Authentication) 2. Then jump to topics as needed

You can scan Part I quickly, but pay attention to the "Deep Dive" sections. Start with whatever chapter addresses your current pain point, then explore related topics.

Use this as a reference guide. The implementation details in each tool chapter can be directly applied to your frameworks. Pay special attention to Part IX (Enterprise Patterns).

Every chapter includes: - 💡 Key Insight boxes highlighting critical concepts - 🔧 Hands-On Exercise with step-by-step solutions - ⚠️ Common Pitfalls that trip up even experienced developers - 🔍 Deep Dive sections for those who want to understand internals - 📋 Quick Reference summaries you can bookmark

Before diving in, ensure you have:

# Python 3.10+ (recommended: 3.11 or 3.12)

python --version # Should show 3.10+

# pip package manager

pip --version

# Node.js (for some tools)

node --version # 18.0+ recommended

# Git for version control

git --version

# Option 1: Let Selenium Manager handle it (recommended for beginners)

pip install selenium>=4.10.0 # Selenium 4.10+ has built-in driver management

# Option 2: Manual installation

# Download ChromeDriver matching your Chrome version from:

# https://chromedriver.chromium.org/downloads

# Create a dedicated directory

mkdir automation-playbook-labs

cd automation-playbook-labs

# Create virtual environment

python -m venv venv

# Activate it

# Windows:

venv\Scripts\activate

# Mac/Linux:

source venv/bin/activate

# Install base packages

pip install selenium pytest requests

"You cannot truly master a tool until you understand the substrate it operates on."

Before writing a single line of automation code, you need to understand what's actually happening inside the browser. This knowledge separates automation engineers who can debug any problem from those who copy-paste Stack Overflow answers and hope for the best.

Every automation failure you'll ever encounter traces back to something happening inside the browser. When your test fails with:

ElementNotInteractableException — The browser says the element isn't readyStaleElementReferenceException — The DOM changed while you were looking at itTimeoutException — Something took longer than expectedUnderstanding browser internals transforms these from "random failures" into predictable, fixable problems.

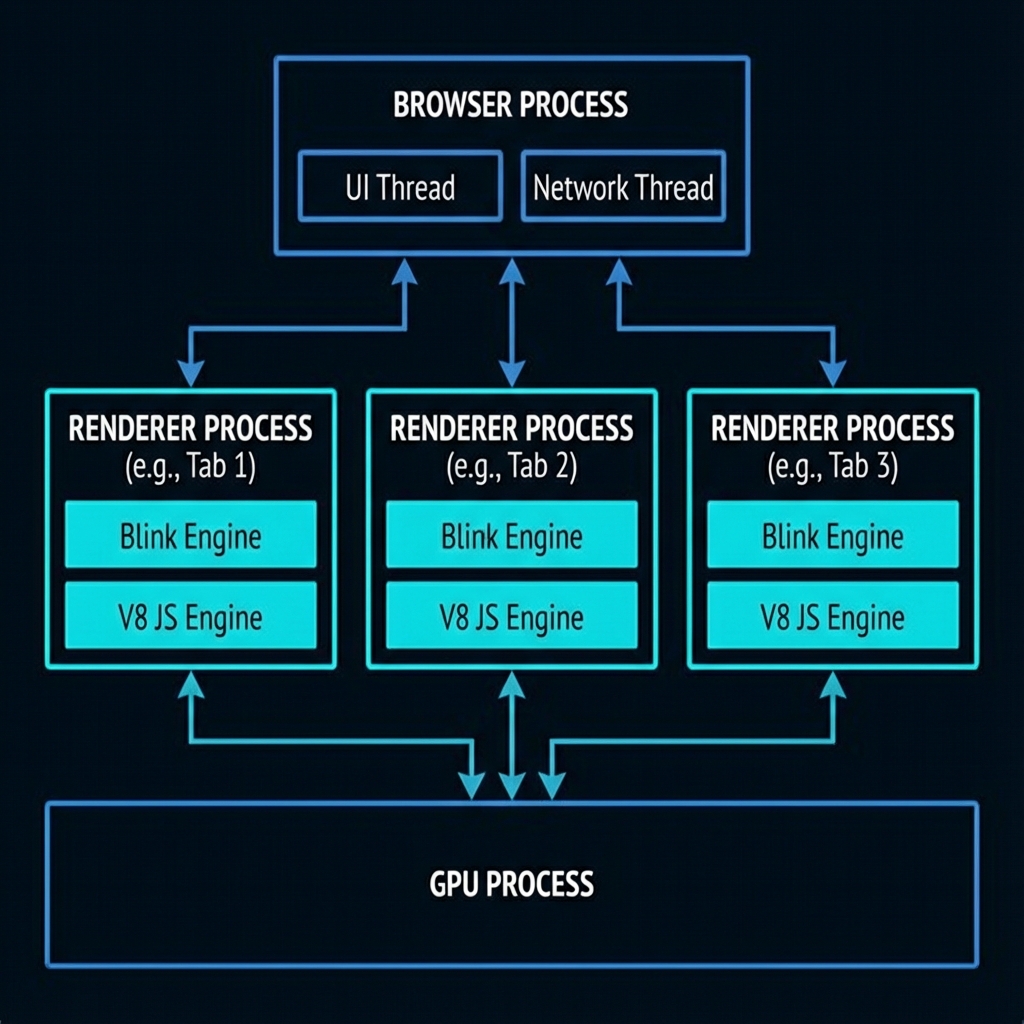

Modern browsers like Chrome are not a single program—they're a collection of processes working together. This architecture was designed for security and stability, but it has profound implications for automation.

┌─────────────────────────────────────────────────────────────────────┐

│ BROWSER PROCESS │

│ • UI (tabs, address bar, bookmarks) │

│ • Network requests │

│ • Storage management │

│ • Inter-Process Communication (IPC) hub │

└────────────┬─────────────────────────────────────────┬──────────────┘

│ │

▼ ▼

┌────────────────────────────┐ ┌────────────────────────────┐

│ RENDERER PROCESS #1 │ │ RENDERER PROCESS #2 │

│ (Tab 1: example.com) │ │ (Tab 2: google.com) │

│ ┌──────────────────────┐ │ │ ┌──────────────────────┐ │

│ │ Blink Engine │ │ │ │ Blink Engine │ │

│ │ • HTML Parser │ │ │ │ • HTML Parser │ │

│ │ • CSS Engine │ │ │ │ • CSS Engine │ │

│ │ • Layout Engine │ │ │ │ • Layout Engine │ │

│ └──────────────────────┘ │ │ └──────────────────────┘ │

│ ┌──────────────────────┐ │ │ ┌──────────────────────┐ │

│ │ V8 JavaScript │ │ │ │ V8 JavaScript │ │

│ │ Engine │ │ │ │ Engine │ │

│ └──────────────────────┘ │ │ └──────────────────────┘ │

🔍 Deep Dive: V8 Bindings & The DOM Bridge

JavaScript doesn't "own" the DOM. The DOM lives in the C++ core of the browser engine (Blink). When you call

document.getElementById(), you're crossing a "bridge" from the JavaScript world (V8) to the C++

world. This context switching is expensive. In high-frequency automation, hundreds of these crossings occur

every second, creating a performance bottleneck that can look like network latency.

└────────────────────────────┘ └────────────────────────────┘

│

▼

┌────────────────────────────┐

│ GPU PROCESS │

│ • Compositing layers │

│ • WebGL rendering │

│ • Video decoding │

└────────────────────────────┘

Key Insight #1: Each tab is isolated

When you open a new browser window or tab in Selenium, you're potentially creating a new renderer process. Cookies, localStorage, and JavaScript state are shared per-origin, but memory and execution are isolated.

Key Insight #2: JavaScript runs in the renderer process

When you call driver.execute_script(), your JavaScript runs in the renderer process—the same place

the website's JavaScript runs. This is why you can access document, window, and the

DOM directly.

Key Insight #3: Network requests go through the browser process

This is why some bot detection techniques work—they can observe network behavior at the browser level, not just at the page level.

When you navigate to a URL, here's what happens inside the browser:

URL Entered → DNS Lookup → TCP Connection → TLS Handshake → HTTP Request → Response

The browser process handles this. The response (HTML, CSS, JS, images) streams back.

<!-- This HTML... -->

<html>

<body>

<div id="container">

<button id="submit">Click Me</button>

</div>

</body>

</html>

<!-- ...becomes this DOM tree -->

Document

└── html

└── body

└── div#container

└── button#submit

└── TextNode: "Click Me"

💡 Key Insight: The DOM is a live tree structure

When you call driver.find_element(By.ID, "submit"), Selenium traverses this tree. If the tree

changes (React re-renders, Ajax loads content), your reference might become "stale."

/* This CSS... */

#container {

display: flex;

}

button {

background: blue;

padding: 10px;

}

/* ...becomes the CSSOM */

StyleSheetList

└── CSSStyleSheet

└── #container { display: flex }

└── button { background: blue; padding: 10px }

The DOM and CSSOM combine to create the Render Tree—only visible elements are included:

RenderTree

└── RenderBody

└── RenderDiv (display: flex)

└── RenderButton (background: blue, padding: 10px)

└── RenderText: "Click Me"

💡 Key Insight: Elements with display: none are NOT in the render tree

This is why element.is_displayed() returns False for hidden elements—they literally

don't exist in the visual representation.

The browser calculates the exact position and size of every element:

button#submit:

x: 150px

y: 200px

width: 80px

height: 40px

⚠️ Common Pitfall: Layout is expensive

Every time the DOM changes, layout might need to recalculate. This is why tests that rapidly modify the DOM can become slow.

Finally, the browser: 1. Paints each layer (turns elements into pixels) 2. Composites layers together (stacking order, transparency) 3. Sends to GPU for display

A common automation mystery: The element is visible, but the click fails. This often happens because of thread contention. The Compositor Thread (which handles scrolling and some animations) is separate from the Main Thread (which handles JS and Layout). If the Main Thread is blocked by a long-running script, the browser can still scroll (compositor), but it cannot process a click (main thread). Automation stability requires the Main Thread to be "idle."

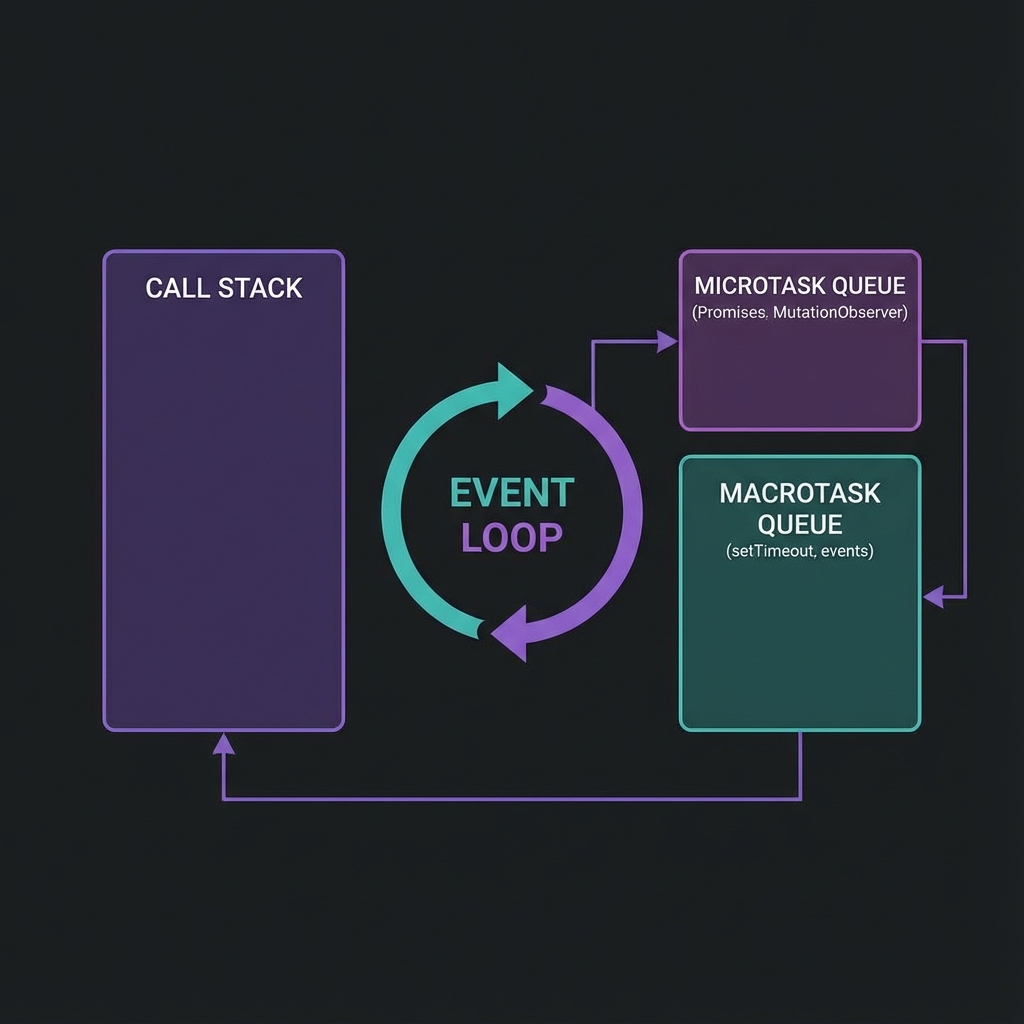

This is the most important concept for understanding flaky tests.

JavaScript in the browser runs on a single thread. There's no true parallelism—everything happens one step at a time. But it doesn't feel single-threaded because of the Event Loop.

┌─────────────────────────────────────────────────────────────────┐

│ EVENT LOOP │

│ │

│ ┌─────────────────┐ ┌────────────────────────────────────┐ │

│ │ Call Stack │ │ Task Queues │ │

│ │ │ │ ┌──────────────────────────────┐ │ │

│ │ Currently │◄───│ │ Macrotask Queue │ │ │

│ │ executing │ │ │ • setTimeout callbacks │ │ │

│ │ code │ │ │ • setInterval callbacks │ │ │

│ │ │ │ │ • I/O operations │ │ │

│ └─────────────────┘ │ │ • UI rendering │ │ │

│ │ └──────────────────────────────┘ │ │

│ │ ┌──────────────────────────────┐ │ │

│ │ │ Microtask Queue │ │ │

│ │ │ • Promise callbacks │ │ │

│ │ │ • MutationObserver │ │ │

│ │ │ • queueMicrotask() │ │ │

│ │ └──────────────────────────────┘ │ │

│ └────────────────────────────────────┘ │

└─────────────────────────────────────────────────────────────────┘

// Website code

button.addEventListener('click', async () => {

await fetch('/api/data'); // Network request

updateUI(); // Updates the DOM

});

When your Selenium test clicks the button:

fetch() is called (goes to microtask queue) ✓This is the #1 cause of flaky tests: assuming synchronous completion.

# ❌ BAD: Assumes synchronous completion

driver.find_element(By.ID, "button").click()

result = driver.find_element(By.ID, "result").text # May fail!

# ✅ GOOD: Waits for the expected outcome

driver.find_element(By.ID, "button").click()

WebDriverWait(driver, 10).until(

EC.text_to_be_present_in_element((By.ID, "result"), "Success")

)

┌──────────────────────────────────────────────────────────────────┐

│ PAGE LOADING TIMELINE │

├──────────────────────────────────────────────────────────────────┤

│ │

│ Navigation Start │

│ │ │

│ ▼ │

│ HTML starts downloading ─────────────────────┐ │

│ │ │ │

│ ▼ ▼ │

│ HTML fully parsed ◄─── DOMContentLoaded event fires │

│ │ │

│ ▼ │

│ CSS, JS, Images loading ─────────────────────┐ │

│ │ │ │

│ ▼ ▼ │

│ All resources loaded ◄───── load event fires │

│ │ │

│ ▼ │

│ Lazy resources, AJAX ─────────────────────────────┐ │

│ │ │ │

│ ▼ ▼ │

│ Fully interactive ◄───── NO STANDARD EVENT! │

│ │

└──────────────────────────────────────────────────────────────────┘

| Event | What's Ready | What's NOT Ready |

|---|---|---|

DOMContentLoaded |

HTML parsed, DOM built | CSS, images, fonts, external JS |

load |

All initial resources | Lazy-loaded content, AJAX data |

| (no event) | Everything | — |

Selenium's driver.get(url) waits for the load event by default. But modern single-page

applications often:

load)Your test starts after load, but the UI you need to interact with hasn't rendered yet!

# Strategy 1: Wait for specific elements

driver.get("https://example.com")

WebDriverWait(driver, 20).until(

EC.presence_of_element_located((By.ID, "main-content"))

)

# Strategy 2: Wait for network idle (using JavaScript)

driver.get("https://example.com")

driver.execute_script("""

return new Promise(resolve => {

if (document.readyState === 'complete') {

setTimeout(resolve, 500); // Small buffer for AJAX

} else {

window.addEventListener('load', () => setTimeout(resolve, 500));

}

});

""")

# Strategy 3: Use Waitless (covered in Chapter 3)

from waitless import stabilize

driver = stabilize(webdriver.Chrome())

driver.get("https://example.com") # Automatically waits for full stability

Understanding this communication protocol helps debug "weird" failures.

┌─────────────────┐ HTTP/REST ┌─────────────────┐

│ │─────────────────────│ │

│ Your Test │ {"using": "id", │ ChromeDriver │

│ (Python) │ "value": "btn"} │ (Executable) │

│ │◄────────────────────│ │

└─────────────────┘ {"value": {...}} └────────┬────────┘

│

│ Chrome DevTools

│ Protocol (CDP)

│

┌───────▼────────┐

│ │

│ Chrome │

│ Browser │

│ │

└────────────────┘

find_element()element = driver.find_element(By.ID, "submit-button")

json

POST /session/{sessionId}/element

{"using": "css selector", "value": "#submit-button"}

document.querySelector("#submit-button")json

{"value": {"element-6066-11e4-a52e-4f735466cecf": "abc123"}}

WebElement objectThat "abc123" is a reference ID. If the DOM element is removed and re-added (common

in React/Vue/Angular), the old reference ID is invalid—hence StaleElementReferenceException.

Selenium 4 introduced direct CDP access, unlocking powerful capabilities:

# Traditional WebDriver (limited)

driver.get("https://example.com")

# Direct CDP access (powerful)

driver.execute_cdp_cmd("Network.enable", {})

driver.execute_cdp_cmd("Network.setExtraHTTPHeaders", {

"headers": {"X-Custom-Header": "my-value"}

})

# Listen to network events

driver.execute_cdp_cmd("Network.enable", {})

# Now you can capture all network requests/responses

| Domain | Capabilities |

|---|---|

Network |

Intercept requests, mock responses, throttle bandwidth |

DOM |

Query/modify DOM, track mutations |

Performance |

Collect metrics, trace execution |

Console |

Capture console.log output |

Emulation |

Spoof device, geolocation, timezone |

Every Selenium command (e.g., element.is_displayed()) is an out-of-process network call. Even

on localhost, there is overhead for serialization, network transmission, and deserialization. When you put a

find_element call inside a tight loop, you're not just waiting for the browser; you're paying a

"tax" of 5-10ms per call just for the communication protocol itself. This is why batching interactions in

JavaScript (using execute_script) is often 10x faster for complex tasks.

Let's apply what we've learned:

from selenium import webdriver

from selenium.webdriver.common.by import By

import time

driver = webdriver.Chrome()

# 1. Navigate and observe loading

driver.get("https://example.com")

print(f"Page load complete. Title: {driver.title}")

# 2. Explore the DOM

dom_html = driver.execute_script("return document.documentElement.outerHTML.length")

print(f"Total DOM size: {dom_html} characters")

# 3. Check event loop status

pending_tasks = driver.execute_script("""

return {

readyState: document.readyState,

animationsPending: document.getAnimations().filter(a => a.playState === 'running').length

}

""")

print(f"Document state: {pending_tasks['readyState']}")

print(f"Running animations: {pending_tasks['animationsPending']}")

# 4. Monitor a mutation

driver.execute_script("""

window.__mutations = [];

const observer = new MutationObserver(mutations => {

mutations.forEach(m => window.__mutations.push(m.type));

});

observer.observe(document.body, {childList: true, subtree: true});

""")

# Make a DOM change

driver.execute_script("document.body.innerHTML += '<div>Test</div>'")

mutations = driver.execute_script("return window.__mutations")

print(f"Mutations observed: {mutations}")

driver.quit()

Expected Output:

Page load complete. Title: Example Domain

Total DOM size: 1234 characters

Document state: complete

Running animations: 0

Mutations observed: ['childList']

| Concept | Key Takeaway |

|---|---|

| Multi-Process Architecture | Each tab runs in isolation; crashes don't affect other tabs |

| Rendering Pipeline | HTML → DOM → CSSOM → Render Tree → Layout → Paint |

| Event Loop | Single-threaded but async; explains why clicks don't wait |

| Page Load Events | load event fires before AJAX content is ready |

| WebDriver Protocol | HTTP/JSON commands translated to CDP |

| Stale Elements | DOM changes invalidate element references |

Coming up next: Now that you understand the platform, let's explore why tests fail unpredictably—and how to analyze the root cause.

Flaky tests are the silent killers of automation credibility. A test that passes on Monday, fails on Tuesday, and passes again on Wednesday—with zero code changes—destroys trust faster than any bug in production.

Consider these statistics from real enterprise environments:

| Metric | Impact |

|---|---|

| Developer time investigating false failures | 4-6 hours/week per engineer |

| Pipeline re-runs due to flaky tests | 15-25% of all runs |

| Confidence in test results | Drops below 60% |

| Time to detect real bugs | Increases by 40% |

When developers stop trusting test results, they stop looking at them. The automation suite becomes expensive decoration.

Every flaky test has a deterministic root cause. The test isn't "randomly" failing—it's failing because of specific conditions that aren't always present. Your job is to find those conditions.

Sometimes the browser itself stops responding for a few milliseconds. This happens during Garbage Collection (GC)—when V8 pauses execution to clean up memory—or Just-In-Time (JIT) Compilation. These "micro-stutters" are nearly invisible to humans but can cause a race condition to trigger in automation if the browser pauses exactly when a click is being sent. Stable automation builds in a small buffer for these unavoidable internal pauses.

The browser and test script operate at different speeds. The script says "click the button" before the button is ready.

Symptoms:

- ElementNotInteractableException

- StaleElementReferenceException

- Tests pass locally, fail in CI

Example of the Problem:

# This code has a race condition

driver.get("https://app.example.com/dashboard")

driver.find_element(By.ID, "submit-btn").click() # May fail!

The page is still loading when the click executes. The element might: - Not exist in the DOM yet - Exist but be covered by a loading overlay - Exist but be outside the viewport - Exist but have event handlers not yet attached

🔍 Deep Dive: Why Event Handlers Aren't Attached Immediately

<button id="submit-btn">Submit</button>

<script>

// This runs after the button exists in DOM

document.getElementById('submit-btn').addEventListener('click', handleClick);

</script>

The button is in the DOM immediately, but the event listener is only attached when the

<script> executes. If your test clicks before the script runs, nothing happens!

Solution Pattern:

from selenium.webdriver.support.ui import WebDriverWait

from selenium.webdriver.support import expected_conditions as EC

# Wait for element to be clickable (visible + enabled)

wait = WebDriverWait(driver, 10)

button = wait.until(EC.element_to_be_clickable((By.ID, "submit-btn")))

button.click()

API calls complete at different speeds. A dashboard that loads in 200ms locally might take 2 seconds in CI.

Symptoms: - Tests timeout in CI but pass locally - Intermittent failures correlate with time of day - Tests fail more often on shared CI infrastructure

Why CI Is Different:

| Factor | Local Development | CI Environment |

|---|---|---|

| Network Latency | Near-zero (localhost) | Variable (shared infra) |

| CPU | Dedicated | Shared with other jobs |

| Memory | Abundant | Often limited |

| Browser Startup | Instant (browser cached) | Cold start each time |

Solution Pattern:

# ❌ BAD: Fixed timeout too short

wait = WebDriverWait(driver, 3)

# ✅ GOOD: Generous timeout with early exit

wait = WebDriverWait(driver, 30) # Will return early if condition met

element = wait.until(EC.presence_of_element_located((By.ID, "data-loaded")))

CSS transitions and JavaScript animations don't block Selenium. The test clicks where the button will be, not where it is.

Example:

.modal {

transition: transform 0.3s ease-in-out;

transform: translateY(-100%);

}

.modal.open {

transform: translateY(0);

}

The modal slides in over 300ms. Selenium doesn't wait for CSS transitions.

🔍 Deep Dive: How Animations Break Clicks

opacity > 0 and display != none

Solution Pattern:

# Wait for all animations to complete

def wait_for_animations(driver):

driver.execute_script("""

return new Promise(resolve => {

const checkAnimations = () => {

const animations = document.getAnimations();

const running = animations.filter(a => a.playState === 'running');

if (running.length === 0) {

resolve();

} else {

requestAnimationFrame(checkAnimations);

}

};

checkAnimations();

});

""")

# Usage

driver.find_element(By.CLASS_NAME, "open-modal").click()

wait_for_animations(driver)

modal_button = driver.find_element(By.ID, "modal-confirm")

modal_button.click()

Solution Patterns:

# 1. Mock external services

@pytest.fixture

def mock_payment_gateway():

with responses.RequestsMock() as rsps:

rsps.add(

responses.POST,

"https://api.stripe.com/v1/charges",

json={"id": "ch_test123", "status": "succeeded"},

status=200

)

yield rsps

# 2. Isolate database

@pytest.fixture

def isolated_db_session():

# Create unique schema per test

schema_name = f"test_{uuid.uuid4().hex[:8]}"

create_schema(schema_name)

yield schema_name

drop_schema(schema_name)

# 3. Control time

from freezegun import freeze_time

@freeze_time("2024-06-15 14:30:00")

def test_discount_during_sale_period():

# Time-sensitive logic now predictable

assert calculate_discount() == 0.20

Symptoms: - Failures cluster at certain times (when CI is busy) - Memory-related crashes - "Chrome not reachable" errors

Solution Patterns:

# 1. Request dedicated CI resources

# .github/workflows/test.yml

jobs:

test:

runs-on: ubuntu-latest

timeout-minutes: 30

env:

CHROME_ARGS: "--disable-dev-shm-usage --no-sandbox"

# 2. Limit parallel browser instances

# pytest.ini

[pytest]

addopts = -n 4 # Max 4 parallel workers

# 3. Add resource monitoring

import psutil

def check_system_resources():

memory = psutil.virtual_memory()

if memory.percent > 90:

logging.warning(f"High memory usage: {memory.percent}%")

# Consider failing fast rather than flaking

time.sleep() - The Worst Fixdriver.get("https://app.example.com/dashboard")

time.sleep(5) # "This should be enough time"

driver.find_element(By.ID, "submit-btn").click()

Problems: - Too slow: You wait 5 seconds even when the page loads in 500ms - Still flaky: Sometimes 5 seconds isn't enough - Compounds: 100 tests × 3 sleeps each × 5 seconds = 25 minutes wasted

from selenium.webdriver.support.ui import WebDriverWait

from selenium.webdriver.support import expected_conditions as EC

wait = WebDriverWait(driver, 10)

element = wait.until(

EC.element_to_be_clickable((By.ID, "submit-btn"))

)

element.click()

Improvements: - Returns as soon as condition is met - Has a timeout for failure cases - More descriptive failures

Remaining Problems: - Doesn't wait for network calls to complete - Doesn't wait for animations to finish - Doesn't detect JavaScript-driven readiness - Requires explicit waits everywhere in code

class all_animations_complete:

def __call__(self, driver):

return driver.execute_script("""

return document.getAnimations().every(a =>

a.playState === 'finished' || a.playState === 'idle'

);

""")

wait.until(all_animations_complete())

Problems: - Must remember to add this everywhere - Doesn't handle network calls - Complex to maintain across team

Before jumping to solutions, you need to diagnose the specific cause of flakiness.

Run the flaky test 50+ times and analyze patterns:

# pytest_flaky_tracker.py

import pytest

import json

from datetime import datetime

RESULTS_FILE = "flaky_analysis.json"

@pytest.hookimpl(hookwrapper=True)

def pytest_runtest_makereport(item, call):

outcome = yield

report = outcome.get_result()

if call.when == "call":

result = {

"test": item.name,

"timestamp": datetime.now().isoformat(),

"passed": report.passed,

"duration": report.duration,

"error": str(report.longrepr) if report.failed else None

}

# Append to results file

try:

with open(RESULTS_FILE, "r") as f:

data = json.load(f)

except FileNotFoundError:

data = []

data.append(result)

with open(RESULTS_FILE, "w") as f:

json.dump(data, f, indent=2)

Run analysis:

for i in {1..50}; do pytest test_flaky.py --tb=short; done

python analyze_flaky.py # Analyze patterns

@pytest.fixture

def driver(request):

driver = webdriver.Chrome()

yield driver

# Capture screenshot on failure

if request.node.rep_call.failed:

screenshot_dir = Path("screenshots")

screenshot_dir.mkdir(exist_ok=True)

filename = f"{request.node.name}_{datetime.now():%Y%m%d_%H%M%S}.png"

driver.save_screenshot(str(screenshot_dir / filename))

driver.quit()

@pytest.hookimpl(tryfirst=True, hookwrapper=True)

def pytest_runtest_makereport(item, call):

outcome = yield

rep = outcome.get_result()

setattr(item, f"rep_{rep.when}", rep)

def enable_network_logging(driver):

driver.execute_cdp_cmd("Network.enable", {})

def get_pending_requests(driver):

# This requires more complex CDP subscription setup

logs = driver.execute_script("""

return window.performance.getEntriesByType('resource')

.filter(e => e.responseEnd === 0)

.map(e => e.name);

""")

return logs

| Flakiness Category | Percentage | Primary Solution |

|---|---|---|

| Race Conditions | 40% | Explicit waits for expected state |

| Network Variability | 25% | Generous timeouts, network mocking |

| Animation Interference | 15% | Wait for getAnimations() to settle |

| External Dependencies | 10% | Mock services, isolate data |

| Resource Contention | 10% | Dedicated resources, monitoring |

The Ultimate Solution: In the next chapter, we'll introduce Waitless, which addresses race conditions, network variability, AND animation interference automatically—with no code changes required.

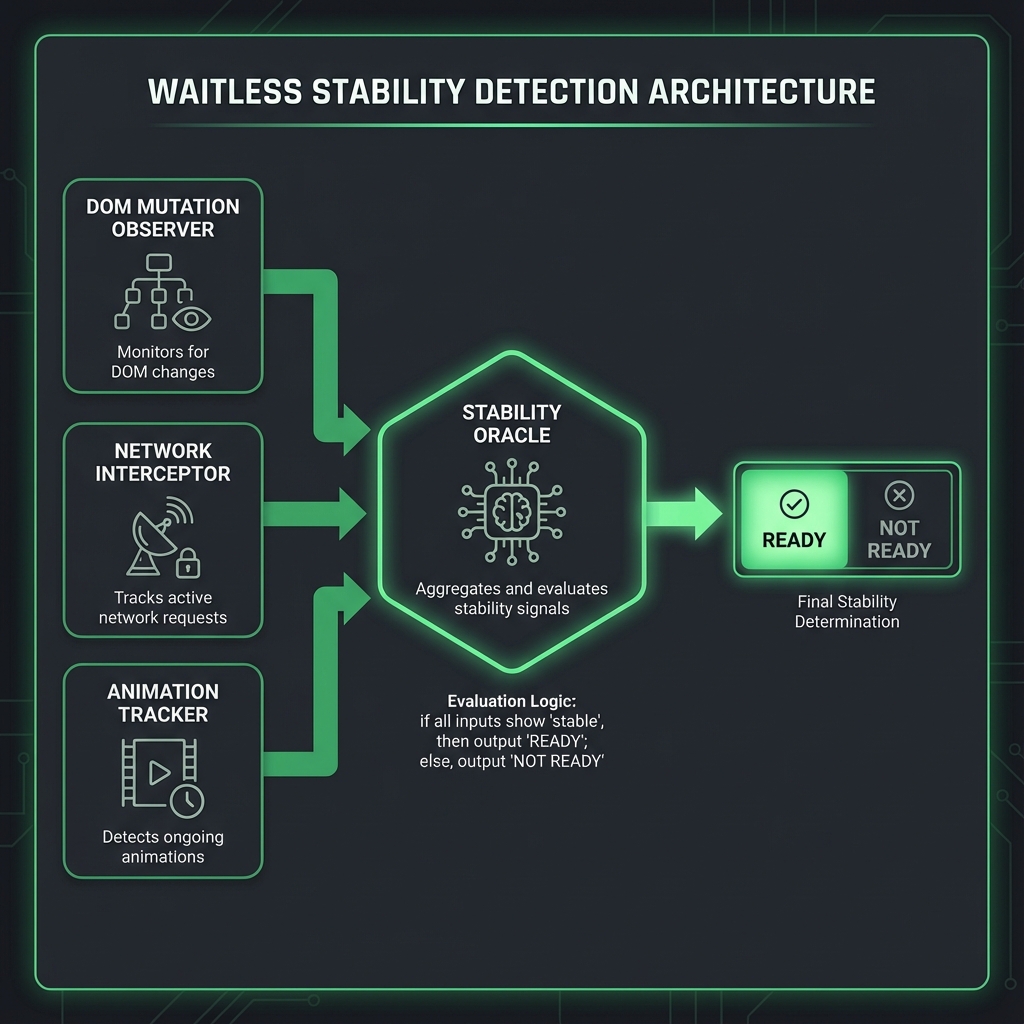

Instead of telling Selenium when to wait, Waitless instruments the browser itself to detect when waiting is no longer needed.

Traditional approaches ask: "What should I wait for?"

Waitless asks: "What signals indicate the page is stable?"

The four signals of stability: 1. DOM settled — No elements being added/removed/modified 2. Network idle — No pending HTTP requests 3. Animations complete — No CSS or JavaScript animations running 4. Shadow Elements detected — Full support for encapsulated Shadow DOM stability

When all three are true, the page is ready for interaction.

┌─────────────────────────────────────────────────────────────────┐

│ YOUR TEST CODE │

│ driver.find_element(By.ID, "btn").click() │

└─────────────────────────────────────────────────────────────────┘

│

▼

┌─────────────────────────────────────────────────────────────────┐

│ WAITLESS WRAPPER │

│ • Intercepts all WebDriver commands │

│ • Injects stability checks before each action │

│ • No code changes required in your tests │

└─────────────────────────────────────────────────────────────────┘

│

▼

┌─────────────────────────────────────────────────────────────────┐

│ BROWSER-SIDE INSTRUMENTATION │

│ ┌─────────────────┐ ┌─────────────────┐ ┌─────────────────┐ │

│ │ Mutation │ │ Network │ │ Animation │ │

│ │ Observer │ │ Interceptor │ │ Tracker │ │

│ │ │ │ │ │ │ │

│ │ Watches DOM │ │ Tracks XHR & │ │ Monitors CSS & │ │

│ │ changes via │ │ Fetch pending │ │ JS animations │ │

│ │ MutationObserver│ │ requests │ │ │ │

│ └─────────────────┘ └─────────────────┘ └─────────────────┘ │

│ │ │

│ ▼ │

│ STABILITY ORACLE │

│ "Is everything settled? Yes → Proceed" │

└─────────────────────────────────────────────────────────────────┘

pip install waitless

from selenium import webdriver

from waitless import stabilize

# Wrap your driver once at the start

driver = stabilize(webdriver.Chrome())

# All subsequent commands automatically wait for stability

driver.get("https://app.example.com")

driver.find_element(By.ID, "dynamic-button").click() # Stable!

driver.find_element(By.ID, "ajax-loaded-element").send_keys("text") # Stable!

That's it. No explicit waits. No sleep statements. The driver automatically waits for stability before every action. New in v0.3.0+: Waitless now natively supports Shadow DOM, ensuring stability even within encapsulated web components without extra configuration.

The MutationObserver API watches for changes to the DOM tree:

// Injected by Waitless into the browser

(function() {

window.__waitless_last_mutation = Date.now();

window.__waitless_mutation_count = 0;

const observer = new MutationObserver((mutations) => {

window.__waitless_mutation_count += mutations.length;

window.__waitless_last_mutation = Date.now();

});

observer.observe(document.body, {

childList: true, // Watch for added/removed elements

subtree: true, // Watch entire DOM tree

attributes: true, // Watch attribute changes

characterData: true // Watch text content changes

});

})();

Stability Criteria: No mutations for 100ms (configurable).

Why 100ms? React, Vue, and Angular typically batch multiple DOM updates within a single animation frame (16ms). The 100ms buffer ensures all related updates have completed.

Waitless intercepts XMLHttpRequest and fetch to track pending requests:

// XMLHttpRequest interception

(function() {

window.__waitless_pending_requests = 0;

const originalXHROpen = XMLHttpRequest.prototype.open;

const originalXHRSend = XMLHttpRequest.prototype.send;

XMLHttpRequest.prototype.open = function(...args) {

this.__waitless_url = args[1];

return originalXHROpen.apply(this, args);

};

XMLHttpRequest.prototype.send = function(...args) {

window.__waitless_pending_requests++;

this.addEventListener('loadend', () => {

window.__waitless_pending_requests--;

});

return originalXHRSend.apply(this, args);

};

// Fetch interception

const originalFetch = window.fetch;

window.fetch = function(...args) {

window.__waitless_pending_requests++;

return originalFetch.apply(this, args)

.finally(() => {

window.__waitless_pending_requests--;

});

};

})();

Stability Criteria: Zero pending requests.

Tracks CSS animations and JavaScript animations:

(function() {

window.__waitless_active_animations = 0;

// CSS Animation tracking

document.addEventListener('animationstart', () => {

window.__waitless_active_animations++;

});

document.addEventListener('animationend', () => {

window.__waitless_active_animations--;

});

document.addEventListener('transitionstart', () => {

window.__waitless_active_animations++;

});

document.addEventListener('transitionend', () => {

window.__waitless_active_animations--;

});

})();

// Web Animations API check

const getActiveAnimations = () => {

return document.getAnimations().filter(a =>

a.playState === 'running'

).length;

};

Stability Criteria: Zero running animations.

from waitless import stabilize, StabilityConfig

config = StabilityConfig(

# Timing controls

mutation_settle_time=100, # ms to wait after last DOM change

network_idle_time=50, # ms to wait after last network request

animation_settle_time=50, # ms to wait after last animation

# Safety limits

max_wait_time=30000, # maximum wait time in ms

poll_interval=50, # how often to check stability

# URL filtering (ignore analytics, monitoring, etc.)

ignore_urls=[

r".*google-analytics.*",

r".*googletagmanager.*",

r".*hotjar.*",

r".*sentry.*",

r".*datadog.*"

]

)

driver = stabilize(webdriver.Chrome(), config=config)

| Scenario | Adjustment |

|---|---|

| Very slow API responses | Increase max_wait_time |

| Complex animations | Increase animation_settle_time |

| Analytics causing false positives | Add URLs to ignore_urls |

| Need faster feedback | Decrease poll_interval (use cautiously) |

When a test still fails, Waitless provides diagnostic information:

from waitless import get_diagnostics

# After a test failure, check what was unstable

diagnostics = get_diagnostics(driver)

print(f"Pending network requests: {diagnostics['pending_requests']}")

print(f"Last mutation age: {diagnostics['ms_since_last_mutation']}ms")

print(f"Active animations: {diagnostics['active_animations']}")

print(f"Blocking URLs: {diagnostics['blocking_urls']}")

Example output for a failing test:

Pending network requests: 2

Last mutation age: 15ms

Active animations: 0

Blocking URLs: ['/api/user/profile', '/api/notifications']

This tells you the page wasn't stable because two API calls were still pending.

Before Waitless:

Test Suite: checkout_flow (5 tests)

├── test_add_to_cart PASSED

├── test_update_quantity FAILED (StaleElementReference)

├── test_apply_coupon PASSED

├── test_checkout FAILED (ElementNotInteractable)

└── test_payment PASSED

Run 1: 60% pass rate

Run 2: 80% pass rate

Run 3: 40% pass rate

Average: 60% (Expected: 100%)

Re-runs required: 3-4 per CI pipeline

After Waitless:

Test Suite: checkout_flow (5 tests)

├── test_add_to_cart PASSED

├── test_update_quantity PASSED

├── test_apply_coupon PASSED

├── test_checkout PASSED

└── test_payment PASSED

Run 1-100: 100% pass rate

Flaky failures eliminated: 100%

Re-runs required: 0

If your app uses long-polling or maintains open WebSocket connections, Waitless may wait indefinitely for "network idle."

Solution:

config = StabilityConfig(

ignore_urls=[

r".*socket.*",

r".*poll.*",

r".*long-poll.*"

]

)

Loading spinners and animated backgrounds never "complete."

Solution:

# Waitless ignores animations on elements with these classes

config = StabilityConfig(

ignore_animation_selectors=[

".loading-spinner",

".animated-background",

"[data-infinite-animation]"

]

)

Google Analytics, Hotjar, and other analytics tools make constant requests.

Solution:

config = StabilityConfig(

ignore_urls=[

r".*google-analytics.*",

r".*googletagmanager.*",

r".*hotjar.*",

r".*segment.*"

]

)

Create a test that demonstrates Waitless in action:

from selenium import webdriver

from selenium.webdriver.common.by import By

from waitless import stabilize, StabilityConfig, get_diagnostics

import time

# Setup with custom config

config = StabilityConfig(

mutation_settle_time=200, # Extra buffer for this exercise

max_wait_time=10000

)

driver = stabilize(webdriver.Chrome(), config=config)

try:

# Test 1: Dynamic content loading

driver.get("https://jsonplaceholder.typicode.com/")

# By the time this line runs, the page is guaranteed stable

title = driver.find_element(By.TAG_NAME, "h1").text

print(f"✓ Page loaded. Title: {title}")

# Check what Waitless detected

diagnostics = get_diagnostics(driver)

print(f" Stability achieved in {diagnostics['total_wait_time']}ms")

# Test 2: Navigating to a different page

driver.get("https://httpbin.org/html")

content = driver.find_element(By.TAG_NAME, "p").text[:50]

print(f"✓ Second page loaded. Content preview: {content}...")

print("\n✅ All tests passed with automatic stability detection!")

finally:

driver.quit()

| Feature | Benefit |

|---|---|

| Automatic stability detection | No manual waits needed |

| DOM mutation tracking | Catches React/Vue re-renders |

| Network request tracking | Waits for AJAX to complete |

| Animation awareness | Prevents mid-animation clicks |

| Zero code changes | Works with existing tests |

| Configurable thresholds | Tune for your app's behavior |

Key Takeaway: Waitless shifts the waiting logic from your tests to the browser itself. Instead of guessing when to wait, it observes actual browser activity and proceeds only when truly stable.

Coming up next: Now that we've eliminated flakiness in functional tests, let's tackle visual testing—because a button working isn't the same as a button looking right.

"A user doesn't interact with the DOM—they interact with pixels. Test accordingly."

Functional tests verify that buttons click and forms submit. They don't verify that: - The button is visible and properly styled - Text is readable (not white on white) - Layout hasn't shifted unexpectedly - Mobile responsive design still works - CSS didn't accidentally hide critical elements

A user sees pixels, not DOM elements. Visual testing validates what users actually experience.

| Bug Type | Functional Test Result | User Experience |

|---|---|---|

| Button moved 200px left | ✅ PASS (still clickable) | "Where's the buy button?" |

| Text color matches background | ✅ PASS (text exists in DOM) | "I can't read anything!" |

| Modal renders behind overlay | ✅ PASS (elements exist) | "The site is frozen!" |

| Font failed to load | ✅ PASS (text displays) | "This looks unprofessional" |

| Mobile layout broken | ✅ PASS (desktop tested) | 50% of users can't use site |

Traditional visual testing compares screenshots pixel-by-pixel:

# Naive approach

from PIL import ImageChops

def compare_images(baseline, current):

diff = ImageChops.difference(baseline, current)

return diff.getbbox() is None # True if identical

Problems with this approach: - Anti-aliasing differences across browsers/OS - Sub-pixel rendering variations - Font smoothing differences (Mac vs Windows) - System clock visible in screenshots - Dynamic content (usernames, timestamps) - Result: Constant false positives

Two images can be: - Pixel-different but visually identical (anti-aliasing variations) - Pixel-similar but visually different (subtle color shift across entire page)

We need algorithms that match human perception, not bit comparison.

Visual Guard uses perceptual comparison algorithms that mimic how humans see:

SSIM doesn't compare pixels—it compares the structure of images:

SSIM analyzes three components:

1. Luminance (l) - Overall brightness comparison

2. Contrast (c) - Variation in brightness

3. Structure (s) - Patterns and edges

Final Score = l^α × c^β × s^γ

Where α, β, γ are weights (typically all 1)

🔍 Deep Dive: How SSIM Works

┌─────────────────────────────────────────────────────────────────┐

│ SSIM COMPARISON PROCESS │

├─────────────────────────────────────────────────────────────────┤

│ │

│ BASELINE IMAGE CURRENT IMAGE │

│ ┌─────────────────┐ ┌─────────────────┐ │

│ │ ░░░░░░░░░░░░░░ │ │ ░░░░░░░░░░░░░░ │ │

│ │ ░░▓▓▓▓▓▓▓▓░░░░ │ │ ░░▓▓▓▓▓▓▓▓░░░░ │ │

│ │ ░░▓▓▓▓▓▓▓▓░░░░ │ │ ░░▓▓▓▓▓▓▓▓░░░░ │ │

│ │ ░░░░░░░░░░░░░░ │ │ ░░░░░░░░░░░░░░ │ │

│ └─────────────────┘ └─────────────────┘ │

│ │ │ │

│ └──────────┬───────────────┘ │

│ ▼ │

│ ┌─────────────────────┐ │

│ │ For each window: │ │

│ │ • Mean (μ) │ │

│ │ • Variance (σ²) │ │

│ │ • Covariance (σxy) │ │

│ └─────────────────────┘ │

│ │ │

│ ▼ │

│ ┌─────────────────────┐ │

│ │ Calculate SSIM: │ │

│ │ (2μxμy + C1)(2σxy + C2) │

│ │ ───────────────────────── │

│ │ (μx² + μy² + C1)(σx² + σy² + C2) │

│ └─────────────────────┘ │

│ │ │

│ ▼ │

│ SSIM Score: 0.97 │

│ (1.0 = identical, 0.0 = completely different) │

│ │

└─────────────────────────────────────────────────────────────────┘

Why SSIM is better for visual testing: - Ignores minor anti-aliasing differences (structure preserved) - Detects layout shifts (structure changed) - Handles slight color variations gracefully - Matches human perception of "same" vs "different"

pHash creates a "fingerprint" of the image that's resistant to minor changes:

# How pHash works conceptually

def perceptual_hash(image):

# 1. Resize to small square (32x32)

small = resize(image, 32, 32)

# 2. Convert to grayscale

gray = grayscale(small)

# 3. Apply Discrete Cosine Transform (DCT)

dct = discrete_cosine_transform(gray)

# 4. Keep only low-frequency components (top-left 8x8)

low_freq = dct[:8, :8]

# 5. Compute average (excluding DC component)

avg = average(low_freq[1:])

# 6. Create hash: 1 if above average, 0 if below

hash = "".join("1" if v > avg else "0" for v in low_freq.flat)

return hash # 64-bit string like "1010110010..."

Comparing with pHash:

# Hamming distance = number of different bits

hash1 = "10101100..." # Baseline

hash2 = "10101110..." # Current (only 1 bit different)

hamming_distance = 1 # Very similar!

# Threshold: distance < 10 means same image

pip install visual-guard

from selenium import webdriver

from visualguard import VisualGuard

driver = webdriver.Chrome()

driver.get("https://example.com")

# Initialize Visual Guard

guard = VisualGuard(

baseline_dir="./baselines",

comparison_method="ssim",

threshold=0.95 # 95% similarity required to pass

)

# Capture current state

guard.capture("homepage", driver)

# Compare against baseline (creates baseline if first run)

result = guard.compare("homepage")

if not result.passed:

print(f"Visual regression detected!")

print(f"Similarity score: {result.similarity:.2%}")

print(f"Difference regions: {result.diff_regions}")

result.save_diff_image("./reports/homepage_diff.png")

else:

print(f"Visual check passed! Similarity: {result.similarity:.2%}")

driver.quit()

Real applications have dynamic content: timestamps, usernames, advertisements. Visual Guard provides region masking:

from visualguard import VisualGuard, MaskRegion

guard = VisualGuard(baseline_dir="./baselines")

# Define regions to ignore during comparison

masks = [

# By coordinates (x, y, width, height)

MaskRegion(name="header_time", x=800, y=10, width=150, height=30),

# By CSS selector (element found and masked automatically)

MaskRegion(name="user_avatar", selector="#user-avatar"),

MaskRegion(name="ad_banner", selector=".advertisement"),

MaskRegion(name="timestamp", selector="[data-testid='timestamp']"),

]

result = guard.compare("dashboard", masks=masks)

Don't know what's dynamic? Let Visual Guard figure it out:

from visualguard import discover_dynamic_regions

# Load the same page 3 times, detect what changes

dynamic_regions = discover_dynamic_regions(

driver,

url="https://app.example.com/dashboard",

runs=3,

interval_seconds=2

)

print(f"Detected {len(dynamic_regions)} dynamic regions:")

for region in dynamic_regions:

print(f" {region.selector}: changes {region.change_frequency}% of the time")

# Output:

# Detected 3 dynamic regions:

# #notification-count: changes 100% of the time

# .timestamp: changes 100% of the time

# .chart-tooltip: changes 67% of the time

from visualguard import VisualGuard, BrowserConfig

# Define all browser/viewport combinations to test

configurations = [

# Desktop browsers

BrowserConfig(browser="chrome", width=1920, height=1080),

BrowserConfig(browser="firefox", width=1920, height=1080),

BrowserConfig(browser="edge", width=1920, height=1080),

# Mobile viewports (using Chrome)

BrowserConfig(browser="chrome", width=375, height=812, device="iPhone 12"),

BrowserConfig(browser="chrome", width=414, height=896, device="iPhone 11"),

BrowserConfig(browser="chrome", width=768, height=1024, device="iPad"),

]

guard = VisualGuard(baseline_dir="./baselines/multi-browser")

for config in configurations:

driver = create_driver_for_config(config)

driver.get("https://example.com")

# Each config gets its own baseline

capture_name = f"homepage_{config.browser}_{config.width}x{config.height}"

guard.capture(capture_name, driver)

result = guard.compare(capture_name)

print(f"{capture_name}: {'PASS' if result.passed else 'FAIL'}")

driver.quit()

For pages with scroll:

from visualguard import VisualGuard, FullPageMode

guard = VisualGuard(

baseline_dir="./baselines",

full_page_mode=FullPageMode.SCROLL_STITCH # Scroll and stitch screenshots

)

# Captures entire page, not just viewport

guard.capture("long_page", driver)

from visualguard import generate_report

# After running all visual tests

generate_report(

results_dir="./visual_results",

output_file="./reports/visual_regression.html",

include_thumbnails=True,

side_by_side=True,

diff_highlighting=True

)

The report includes: - Thumbnail grid of all comparisons - Side-by-side baseline vs. current - Difference highlighting overlay (pink/red shows changes) - Filtering by status (passed/failed) - One-click baseline update for intentional changes

"""

Complete visual testing workflow example.

Creates baselines on first run, compares on subsequent runs.

"""

from selenium import webdriver

from visualguard import VisualGuard, MaskRegion

import os

def run_visual_tests():

driver = webdriver.Chrome()

guard = VisualGuard(baseline_dir="./baselines", threshold=0.95)

test_cases = [

{

"name": "google_homepage",

"url": "https://www.google.com",

"masks": [

MaskRegion(name="doodle", selector=".lnXdpd"), # Google Doodle changes

]

},

{

"name": "example_domain",

"url": "https://example.com",

"masks": []

}

]

results = []

for test in test_cases:

driver.get(test["url"])

# Wait for page to stabilize

import time

time.sleep(2)

# Capture

guard.capture(test["name"], driver)

# Compare

result = guard.compare(test["name"], masks=test["masks"])

results.append({

"name": test["name"],

"passed": result.passed,

"similarity": result.similarity

})

# Save diff image if failed

if not result.passed:

os.makedirs("./diffs", exist_ok=True)

result.save_diff_image(f"./diffs/{test['name']}_diff.png")

driver.quit()

# Print summary

print("\n" + "="*50)

print("VISUAL TEST RESULTS")

print("="*50)

for r in results:

status = "✅ PASS" if r["passed"] else "❌ FAIL"

print(f"{status} | {r['name']} | Similarity: {r['similarity']:.2%}")

passed = sum(1 for r in results if r["passed"])

print(f"\nTotal: {passed}/{len(results)} passed")

if __name__ == "__main__":

run_visual_tests()

| Concept | Key Takeaway |

|---|---|

| Functional vs Visual | Functional tests miss layout/style bugs |

| Pixel comparison | Too brittle, constant false positives |

| SSIM | Compares structure, matches human perception |

| pHash | Creates fingerprint, tolerates minor changes |

| Region masking | Handle dynamic content areas |

| Multi-browser | Test across browsers and viewports |

"Every second spent logging in is a second not spent testing."

Authentication in enterprise applications typically involves: 1. Loading the login page 2. Entering credentials 3. Submitting the form 4. Waiting for MFA/2FA (if applicable) 5. Handling redirects 6. Waiting for dashboard to load

Average time: 8-15 seconds per login.

For a test suite with 500 tests, each starting with a fresh login: - 500 tests × 10 seconds = 83 minutes just on authentication - That's 23% of a 6-hour test run spent logging in

Best practices dictate that tests should be isolated. Each test should: - Start from a known state - Not depend on previous tests - Clean up after itself

This usually means: fresh browser session per test = login per test.

Before we can skip login, we need to understand what "being logged in" means at a technical level.

┌─────────────────────────────────────────────────────────────────┐

│ LOGIN PROCESS BREAKDOWN │

├─────────────────────────────────────────────────────────────────┤

│ │

│ 1. USER SUBMITS CREDENTIALS │

│ POST /auth/login │

│ Body: { username: "user@example.com", password: "***" } │

│ │

│ 2. SERVER VALIDATES & GENERATES SESSION │

│ • Checks credentials against database │

│ • Creates session record (server-side) │

│ • Generates tokens (JWT, session ID, etc.) │

│ │

│ 3. SERVER SENDS AUTHENTICATION DATA TO BROWSER │

│ Set-Cookie: session_id=abc123; HttpOnly; Secure │

│ Set-Cookie: csrf_token=xyz789; SameSite=Strict │

│ Response: { access_token: "eyJ...", user: {...} } │

│ │

│ 4. BROWSER STORES AUTHENTICATION DATA │

│ ┌─────────────────────────────────────────────────────┐ │

│ │ COOKIES │ SESSION STORAGE │ LOCAL STORAGE │ │

│ │ session_id │ temp_data │ user_settings │ │

│ │ csrf_token │ form_state │ access_token │ │

│ │ remember_me │ │ refresh_token │ │

│ │ preferences │ │ user_profile │ │

│ └─────────────────────────────────────────────────────┘ │

│ │

│ 5. SUBSEQUENT REQUESTS INCLUDE AUTH DATA │

│ Cookie: session_id=abc123; csrf_token=xyz789 │

│ Authorization: Bearer eyJ... │

│ │

└─────────────────────────────────────────────────────────────────┘

| Storage Type | Persists After Close? | Accessible By | Size Limit |

|---|---|---|---|

| Cookies | Depends on Expires |

Server + JavaScript | ~4KB per cookie |

| LocalStorage | Yes | JavaScript only | ~5MB |

| SessionStorage | No (tab-scoped) | JavaScript only | ~5MB |

| IndexedDB | Yes | JavaScript only | Varies (large) |

Key Insight: Different apps store auth tokens in different places. Some use cookies, some use localStorage, some use both.

# DON'T DO THIS

@pytest.fixture(scope="session")

def authenticated_driver():

driver = webdriver.Chrome()

login(driver)

yield driver

driver.quit()

Problems: - Tests aren't isolated - One test's actions affect subsequent tests - Cookie expiration causes cascading failures - State pollution is hard to debug

def fast_login(driver, username, password):

# Get auth token via API (bypasses UI)

response = requests.post(

"https://api.example.com/auth/login",

json={"username": username, "password": password}

)

token = response.json()["token"]

# Navigate first (required for cookie domain)

driver.get("https://app.example.com")

# Set cookie

driver.add_cookie({

"name": "auth_token",

"value": token,

"domain": "app.example.com"

})

driver.refresh()

Improvements: - Much faster than UI login - Bypasses MFA in some cases

Remaining Problems: - Only works if API endpoint exists and is accessible - Single cookie may not be enough (CSRF tokens, etc.) - LocalStorage/SessionStorage not populated - Application-specific initialization not triggered

Selenium Teleport captures the complete browser state after a successful login and restores it instantly in future sessions.

{

"captured_at": "2024-06-15T10:30:00Z",

"url": "https://app.example.com/dashboard",

"cookies": [

{"name": "session_id", "value": "abc123", "domain": ".example.com", ...},

{"name": "csrf_token", "value": "xyz789", "domain": "app.example.com", ...},

{"name": "_ga", "value": "GA1.2...", "domain": ".example.com", ...}

],

"local_storage": {

"user_profile": "{\"id\": 123, \"name\": \"Test User\"}",

"access_token": "eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...",

"app_settings": "{\"theme\": \"dark\", \"notifications\": true}"

},

"session_storage": {

"current_page_state": "{\"tab\": \"dashboard\", \"filters\": []}",

"form_draft": null

}

}

pip install selenium-teleport

from selenium import webdriver

from selenium_teleport import BrowserState

# Step 1: Perform login (one-time setup)

driver = webdriver.Chrome()

driver.get("https://app.example.com/login")

# Option A: Manual login

input("Log in manually, then press Enter...")

# Option B: Automated login

driver.find_element(By.ID, "email").send_keys("user@example.com")

driver.find_element(By.ID, "password").send_keys("secure_password")

driver.find_element(By.ID, "login-btn").click()

# Wait for complete authentication

WebDriverWait(driver, 30).until(

EC.presence_of_element_located((By.ID, "dashboard"))

)

# Step 2: Capture the authenticated state

state = BrowserState.capture(driver)

# Step 3: Save to file for reuse

state.save("./states/admin_user.json")

print(f"✓ Captured {len(state.cookies)} cookies")

print(f"✓ Captured {len(state.local_storage)} localStorage items")

print(f"✓ Captured {len(state.session_storage)} sessionStorage items")

driver.quit()

from selenium import webdriver

from selenium_teleport import BrowserState

@pytest.fixture

def authenticated_driver():

driver = webdriver.Chrome()

# CRITICAL: Navigate to the domain first

# Browsers enforce Same-Origin Policy for cookies

driver.get("https://app.example.com")

# Restore the captured state

state = BrowserState.load("./states/admin_user.json")

state.restore(driver)

# Refresh to apply state

driver.refresh()

yield driver

driver.quit()

def test_dashboard_loads(authenticated_driver):

# Already logged in! No login steps needed

assert "Dashboard" in authenticated_driver.title

def test_user_can_view_settings(authenticated_driver):

authenticated_driver.get("https://app.example.com/settings")

assert authenticated_driver.find_element(By.ID, "settings-form").is_displayed()

Browsers prevent setting cookies for domains you haven't visited. This is a security feature called the Same-Origin Policy.

The Problem:

driver = webdriver.Chrome()

# Current URL is about:blank

driver.add_cookie({"name": "token", "value": "abc", "domain": "app.example.com"})

# Silently fails! No error, but cookie isn't set

Selenium Teleport's Solution:

def restore(self, driver):

# Group cookies by domain

domains = set(c["domain"] for c in self.cookies)

for domain in domains:

# Navigate to each domain first

clean_domain = domain.lstrip(".")

driver.get(f"https://{clean_domain}")

# Now cookies for this domain can be set

domain_cookies = [c for c in self.cookies if c["domain"] == domain]

for cookie in domain_cookies:

try:

# Remove unsupported keys that Selenium doesn't accept

safe_cookie = {k: v for k, v in cookie.items()

if k in ["name", "value", "domain", "path",

"secure", "httpOnly", "expiry", "sameSite"]}

driver.add_cookie(safe_cookie)

except Exception as e:

logging.warning(f"Failed to set cookie {cookie['name']}: {e}")

For tests that need different user roles:

from selenium_teleport import StateManager

# Setup: Capture states for different user roles

manager = StateManager(states_dir="./states")

# (Run once for each user type)

# manager.capture("admin_user", driver)

# manager.capture("standard_user", driver)

# manager.capture("readonly_user", driver)

# In tests: Parameterized fixture

@pytest.fixture(params=["admin_user", "standard_user"])

def user_driver(request):

driver = webdriver.Chrome()

driver.get("https://app.example.com")

manager = StateManager(states_dir="./states")

manager.restore(request.param, driver)

driver.refresh()

yield driver, request.param

driver.quit()

def test_permission_based_access(user_driver):

driver, user_type = user_driver

# Navigate to admin page

driver.get("https://app.example.com/admin")

if user_type == "admin_user":

assert driver.find_element(By.ID, "admin-panel").is_displayed()

else:

# Standard users should see "Access Denied"

assert "Access Denied" in driver.page_source

States don't last forever—sessions expire, tokens become invalid.

from selenium_teleport import BrowserState

from datetime import datetime, timedelta

state = BrowserState.load("./states/admin_user.json")

# Check if state is stale

if state.captured_at < datetime.now() - timedelta(hours=24):

print("⚠️ State is more than 24 hours old - may need refresh")

# Check for expired cookies

expired = [

c["name"] for c in state.cookies

if c.get("expiry") and c["expiry"] < datetime.now().timestamp()

]

if expired:

print(f"⚠️ These cookies have expired: {expired}")

# Automatic freshness check during restore

try:

state.restore(driver, strict=True) # Raises if any cookie expired

except StateExpiredError as e:

print(f"State invalid: {e}")

# Re-authenticate and capture new state

# .github/workflows/refresh-states.yml

name: Refresh Auth States

on:

schedule:

- cron: '0 */6 * * *' # Every 6 hours

workflow_dispatch: # Manual trigger

jobs:

refresh:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- name: Refresh admin state

run: python scripts/refresh_states.py

- name: Upload new states

uses: actions/upload-artifact@v4

with:

name: auth-states

path: states/

| Scenario | Time Per Test | For 500 Tests |

|---|---|---|

| Full UI Login | 10-15s | 83+ minutes |

| API Token + Cookie | 3-5s | 25-40 minutes |

| Selenium Teleport | 0.5-1s | 4-8 minutes |

Total time saved per CI run: 75+ minutes

For a team running CI 10 times/day: 750 minutes (12.5 hours) saved daily

Cookies marked HttpOnly can't be read by JavaScript, but CAN be set via Selenium.

# This works even for HttpOnly cookies

driver.add_cookie({

"name": "session",

"value": "abc123",

"httpOnly": True # Selenium can still set this

})

Secure cookies only work on HTTPS:

# ❌ WRONG: HTTP won't accept Secure cookies

driver.get("http://app.example.com")

driver.add_cookie({"name": "token", "secure": True}) # Silently fails

# ✅ CORRECT: Use HTTPS

driver.get("https://app.example.com")

driver.add_cookie({"name": "token", "secure": True}) # Works

Modern browsers enforce SameSite attribute:

# SameSite=Strict cookies won't be sent on cross-site navigation

# Make sure you're on the correct domain when setting

driver.get("https://app.example.com") # Navigate to same site

driver.add_cookie({

"name": "csrf",

"value": "token123",

"sameSite": "Strict"

})

"""

Complete Selenium Teleport workflow.

Run this once to capture state, then use in tests.

"""

from selenium import webdriver

from selenium_teleport import BrowserState, StateManager

from selenium.webdriver.common.by import By

from selenium.webdriver.support.ui import WebDriverWait

from selenium.webdriver.support import expected_conditions as EC

import os

def capture_state_demo():

"""Demo: Capture state from Google (no login, just session)"""

driver = webdriver.Chrome()

driver.get("https://www.google.com")

# Interact to generate some state

search_box = driver.find_element(By.NAME, "q")

search_box.send_keys("Selenium Teleport")

search_box.submit()

# Wait for results

WebDriverWait(driver, 10).until(

EC.presence_of_element_located((By.ID, "search"))

)

# Capture state

state = BrowserState.capture(driver)

# Save

os.makedirs("./demo_states", exist_ok=True)

state.save("./demo_states/google_session.json")

print(f"✓ Captured state from URL: {driver.current_url}")

print(f" Cookies: {len(state.cookies)}")

print(f" LocalStorage items: {len(state.local_storage)}")

driver.quit()

return state

def restore_state_demo():

"""Demo: Restore the captured state"""

driver = webdriver.Chrome()

# Must navigate to domain first

driver.get("https://www.google.com")

# Load and restore state

state = BrowserState.load("./demo_states/google_session.json")

state.restore(driver)

# Verify restoration

cookies_count = len(driver.get_cookies())

print(f"✓ Restored {cookies_count} cookies")

driver.quit()

if __name__ == "__main__":

print("Step 1: Capturing state...")

capture_state_demo()

print("\nStep 2: Restoring state...")

restore_state_demo()

print("\n✅ Teleport demo complete!")

| Concept | Key Takeaway |

|---|---|

| Auth Cost | Login can consume 20-40% of test execution time |

| Browser State | Auth stored in cookies, localStorage, sessionStorage |

| Same-Origin Policy | Must navigate to domain before setting cookies |

| Selenium Teleport | Capture and restore complete auth state |

| Multi-User | Manage different user role states for comprehensive testing |

| Freshness | States expire; automate refresh in CI |

"When the website thinks you're a bot, you can't test anything."

Modern bot detection has evolved far beyond simple CAPTCHAs. Services like Cloudflare, PerimeterX, DataDome, and Akamai use sophisticated fingerprinting that can detect standard Selenium in milliseconds.

┌─────────────────────────────────────────────────────────────────┐

│ BOT DETECTION LAYERS │

├─────────────────────────────────────────────────────────────────┤

│ │

│ LAYER 1: BROWSER FINGERPRINT │

│ Is this a real browser? Does it have all the expected APIs? │

│ • navigator.webdriver flag │

│ • WebGL renderer/vendor │

│ • Canvas fingerprint │

│ • Plugin list │

│ • Screen resolution consistency │

│ │

│ LAYER 2: BEHAVIORAL ANALYSIS │

│ Does this user act human? │

│ • Mouse movement patterns │

│ • Keyboard timing │

│ • Scroll behavior │

│ • Time to first interaction │

│ │

│ LAYER 3: NETWORK SIGNALS │

│ Does the traffic look normal? │

│ • IP reputation │

│ • TLS fingerprint (JA3) │

│ • HTTP header order │

│ • Request timing patterns │

│ │

│ LAYER 4: CHALLENGE-RESPONSE │

│ Can they solve puzzles? │

│ • Invisible reCAPTCHA │

│ • Cloudflare Turnstile │

│ • Proof-of-work challenges │

│ │

└─────────────────────────────────────────────────────────────────┘

// What detection services check first

console.log(navigator.webdriver); // true = Selenium!

// Standard Selenium Chrome sets this to true

// It's a W3C standard for automation detection

Why it exists: The WebDriver spec requires browsers to expose when they're being automated. This is a security feature, not a bug.

// Every GPU produces slightly different rendering

const canvas = document.createElement('canvas');

const gl = canvas.getContext('webgl');

const fingerprint = {

vendor: gl.getParameter(gl.VENDOR),

renderer: gl.getParameter(gl.RENDERER),

extensions: gl.getSupportedExtensions()

};

// Real browser: "Intel Inc." / "Intel Iris OpenGL Engine"

// Headless: Often "Google SwiftShader" or empty

// Same canvas operations produce different pixels per system

const canvas = document.createElement('canvas');

const ctx = canvas.getContext('2d');

ctx.textBaseline = 'top';

ctx.font = '14px Arial';

ctx.fillText('fingerprint', 2, 2);

const hash = canvas.toDataURL().hashCode();

// Hash is unique per browser/OS/GPU combination

// Real browsers have plugins

console.log(navigator.plugins.length);

// Real Chrome: 3-5 plugins (PDF Viewer, etc.)

// Headless: 0 plugins

// Plugin details also checked

for (let plugin of navigator.plugins) {

console.log(plugin.name, plugin.filename);

}

# Standard Selenium Chrome instance

driver = webdriver.Chrome()

# Detection services see:

# ✗ navigator.webdriver = true

# ✗ No WebGL or suspicious WebGL

# ✗ No plugins

# ✗ Suspicious browser automation flags in Chrome args

# ✗ Inhuman interaction speed (clicks happen instantly)

# ✗ No mouse movement between clicks

# Result: Blocked immediately

Even if you fool the fingerprint checks, behavior gives you away:

// Real users have natural mouse paths

document.addEventListener('mousemove', e => {

recordMovement(e.clientX, e.clientY, Date.now());

});

// Analysis:

// Human: Curved paths, acceleration/deceleration, micro-movements

// Bot: No movements, or perfect straight lines between clicks

// Real users have variable typing speed

document.addEventListener('keydown', e => {

recordKeyTiming(e.key, Date.now());

});

// Analysis:

// Human: Variable delays (50-300ms), some keys faster (home row)

// Bot: Perfect consistent timing, or all keys instant

| Metric | Human | Bot |

|---|---|---|

| Time on page before first click | 2-10 seconds | < 500ms |

| Mouse movement before click | Curved path | None or straight |

| Click position | Slightly random | Exact center |

| Double-click frequency | Occasional | Never or always |

SB Stealth Wrapper (StealthAutomation) builds on SeleniumBase to provide multi-layered stealth:

┌─────────────────────────────────────────────────────────────────┐

│ STEALTH ARCHITECTURE │

├─────────────────────────────────────────────────────────────────┤

│ │

│ LAYER 1: ENVIRONMENT DETECTION │

│ ┌─────────────────────────────────────────────────────────┐ │

│ │ • Detects CI vs Local development │ │

│ │ • Configures display (Xvfb on Linux headless) │ │

│ │ • Sets appropriate headless mode │ │

│ │ • Handles Docker/container environments │ │

│ └─────────────────────────────────────────────────────────┘ │

│ │

│ LAYER 2: BROWSER FINGERPRINT SPOOFING │

│ ┌─────────────────────────────────────────────────────────┐ │

│ │ • Patches navigator.webdriver → undefined │ │

│ │ • Injects consistent WebGL fingerprint │ │

│ │ • Adds realistic plugins list │ │

│ │ • Consistent user-agent across all signals │ │

│ │ • Spoofs hardware concurrency and device memory │ │

│ └─────────────────────────────────────────────────────────┘ │

│ │

│ LAYER 3: HUMAN-LIKE BEHAVIOR │

│ ┌─────────────────────────────────────────────────────────┐ │

│ │ • Random micro-delays between actions │ │

│ │ • Simulated mouse movement paths │ │

│ │ • Realistic typing patterns with variable speed │ │

│ │ • Random scroll behavior │ │

│ └─────────────────────────────────────────────────────────┘ │

│ │

│ LAYER 4: NETWORK NORMALIZATION │

│ ┌─────────────────────────────────────────────────────────┐ │

│ │ • Standard TLS configuration │ │

│ │ • Normal HTTP header ordering │ │

│ │ • Proxy rotation support │ │

│ └─────────────────────────────────────────────────────────┘ │

│ │

└─────────────────────────────────────────────────────────────────┘

pip install stealthautomation

from stealthautomation import StealthBrowser

# Automatic environment configuration

with StealthBrowser() as sb:

# Navigate with human-like behavior

sb.open("https://protected-site.com")

# Interactions automatically include human patterns

sb.type("#email", "user@example.com") # Types with realistic timing

sb.type("#password", "secret")

sb.click("#login-button") # Includes mouse movement

# Assertions work normally

sb.assert_element("#dashboard")

from stealthautomation import StealthBrowser, StealthConfig

config = StealthConfig(

# Headless options

headless=True, # True for CI, False for debugging

headless_mode="new", # Chrome's new headless (less detectable)

# Display configuration (Linux CI)

use_virtual_display=True, # Use Xvfb

display_width=1920,

display_height=1080,

# Fingerprint spoofing

webgl_vendor="Intel Inc.",

webgl_renderer="Intel Iris OpenGL Engine",

user_agent="Mozilla/5.0 (Windows NT 10.0; Win64; x64)...",

# Behavior configuration

human_delay_min=0.1, # Minimum delay between actions (seconds)

human_delay_max=0.3, # Maximum delay

enable_mouse_movement=True, # Simulate mouse paths

typing_speed_variance=0.3, # 30% variance in typing speed

)

with StealthBrowser(config=config) as sb:

sb.open("https://protected-site.com")

from stealthautomation import StealthBrowser

with StealthBrowser() as sb:

sb.open("https://site-with-cloudflare.com")

# Stealth wrapper handles the challenge automatically

# by appearing as a real browser

# Wait for any challenge to complete

sb.wait_for_element("#main-content", timeout=30)

# Continue with normal testing

sb.assert_text_visible("Welcome")

# reCAPTCHA v3 scores behavior, not puzzles

# Stealth mode increases score by acting human

with StealthBrowser() as sb:

sb.open("https://site-with-recaptcha.com")

# Spend time on page like a real user

sb.scroll_down()

time.sleep(2)

sb.move_mouse_to_element("#some-section")

# Now interact

sb.click("#protected-button")

name: Stealth Tests

on: [push, pull_request]

jobs:

test:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- name: Set up Python

uses: actions/setup-python@v5

with:

python-version: '3.11'

- name: Install dependencies

run: |

pip install stealthautomation pytest

- name: Install Chrome

run: |

wget -q https://dl.google.com/linux/direct/google-chrome-stable_current_amd64.deb

sudo apt-get install ./google-chrome-stable_current_amd64.deb

- name: Install Xvfb (virtual display)

run: sudo apt-get install -y xvfb

- name: Run tests

run: |

# Start virtual display

Xvfb :99 -screen 0 1920x1080x24 &

export DISPLAY=:99

# Run tests

pytest tests/ -v

from stealthautomation import StealthBrowser, check_detection

with StealthBrowser() as sb:

# Check what detection services might see

detection_report = check_detection(sb)

print("=== Detection Report ===")

print(f"WebDriver flag visible: {detection_report['webdriver_visible']}")

print(f"Plugins detected: {detection_report['plugins_count']}")

print(f"WebGL available: {detection_report['webgl_available']}")

print(f"Canvas fingerprint: {detection_report['canvas_hash'][:20]}...")

# Test against known detection sites

sb.open("https://bot.sannysoft.com")

sb.save_screenshot("bot_test.png")

sb.open("https://browserleaks.com/javascript")

sb.save_screenshot("browserleaks_test.png")

Important: Stealth automation should only be used for: - ✅ Testing your own applications - ✅ Testing applications where you have explicit permission - ✅ QA environments with security controls similar to production

Not for: - ❌ Scraping data without permission - ❌ Bypassing rate limits on third-party services - ❌ Any malicious purposes

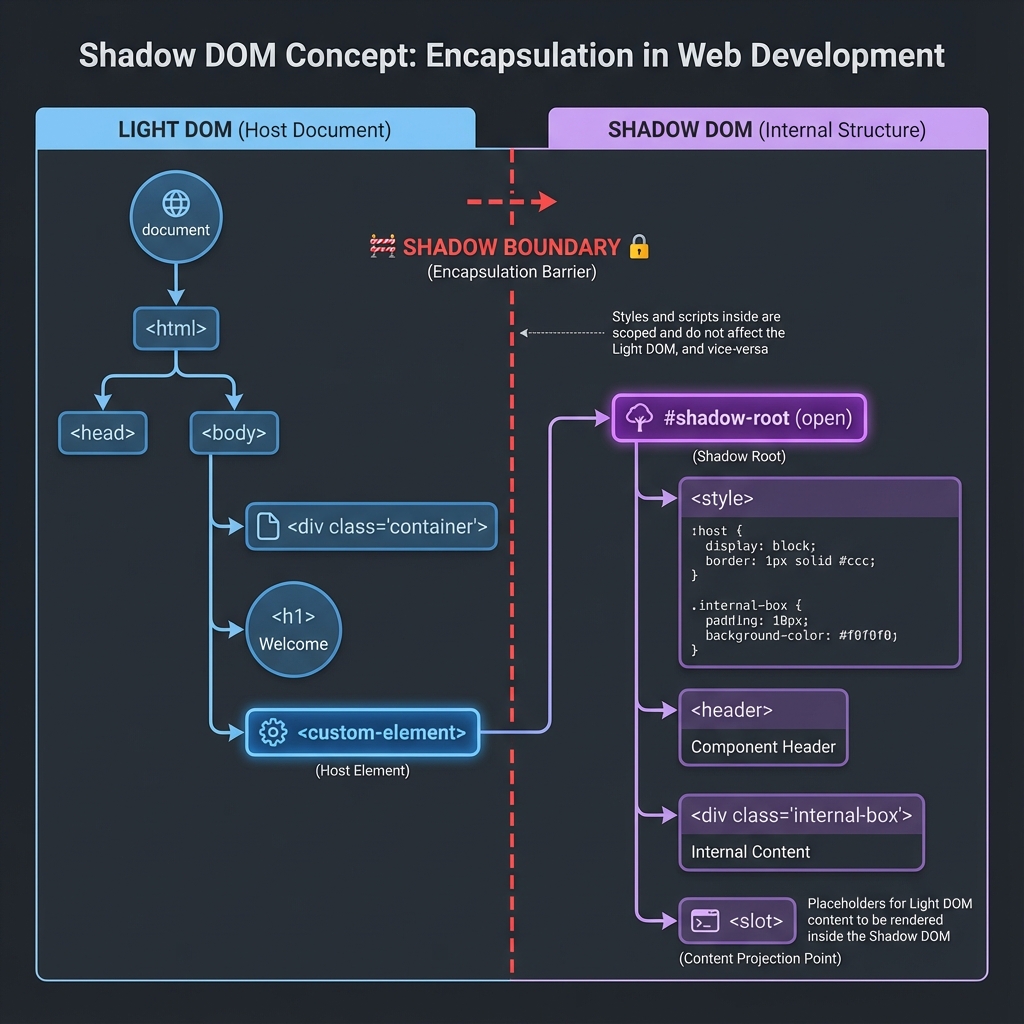

"The shadow boundary is a wall—but every wall has a door."

Shadow DOM is a web standard that enables encapsulation. It creates a separate DOM tree hidden from the main document:

<!-- Main Page (Light DOM) -->

<body>

<h1>Welcome</h1>

<!-- This is a custom element -->

<custom-button id="my-button">

<!-- Shadow boundary starts here -->

#shadow-root (open)

<style>

/* These styles ONLY affect shadow content */

button { background: blue; color: white; }

</style>

<button class="inner-btn">

<slot></slot> <!-- Content projection -->

</button>

<!-- Shadow boundary ends here -->

Click Me <!-- This text goes into the <slot> -->

</custom-button>

</body>

┌─────────────────────────────────────────────────────────────────┐

│ DOCUMENT │

│ ┌───────────────────────────────────────────────────────────┐ │

│ │ LIGHT DOM │ │

│ │ │ │

│ │ <body> │ │

│ │ <h1>Welcome</h1> │ │

│ │ <custom-button id="my-button"> │ │

│ │ ║ │ │

│ │ ║ SHADOW BOUNDARY │ │

│ │ ║ ┌─────────────────────────────────────────────┐ │ │

│ │ ║ │ #shadow-root │ │ │

│ │ ║ │ <style>...</style> │ │ │

│ │ ║ │ <button class="inner-btn"> │ │ │

│ │ ║ │ <slot></slot> │ │ │

│ │ ║ │ </button> │ │ │

│ │ ║ └─────────────────────────────────────────────┘ │ │

│ │ "Click Me" (slotted content) │ │

│ │ </custom-button> │ │

│ │ </body> │ │

│ └───────────────────────────────────────────────────────────┘ │

└─────────────────────────────────────────────────────────────────┘

/* Without Shadow DOM: Styles leak everywhere */

.btn { background: red; } /* Affects ALL .btn on page */

/* With Shadow DOM: Styles are scoped */

/* Inside shadow: */ .btn { background: blue; }

/* These don't affect each other! */

// Without Shadow DOM:

document.querySelector('.btn'); // Finds ANY .btn

// With Shadow DOM:

document.querySelector('.inner-btn'); // Returns null!

// Button is hidden inside shadow root

The component's internal structure is an implementation detail. Users of the component only see: